The International Atomic Energy Agency held its Technical Meeting on Artificial Intelligence and Radiation Protection in Medicine on April 8-10 in Vienna.

At the meeting, Professor Karim Lekadir gave a talk on behalf of the ISR titled “When is AI ‘Ready’ for Radiation Protection of Patients?”

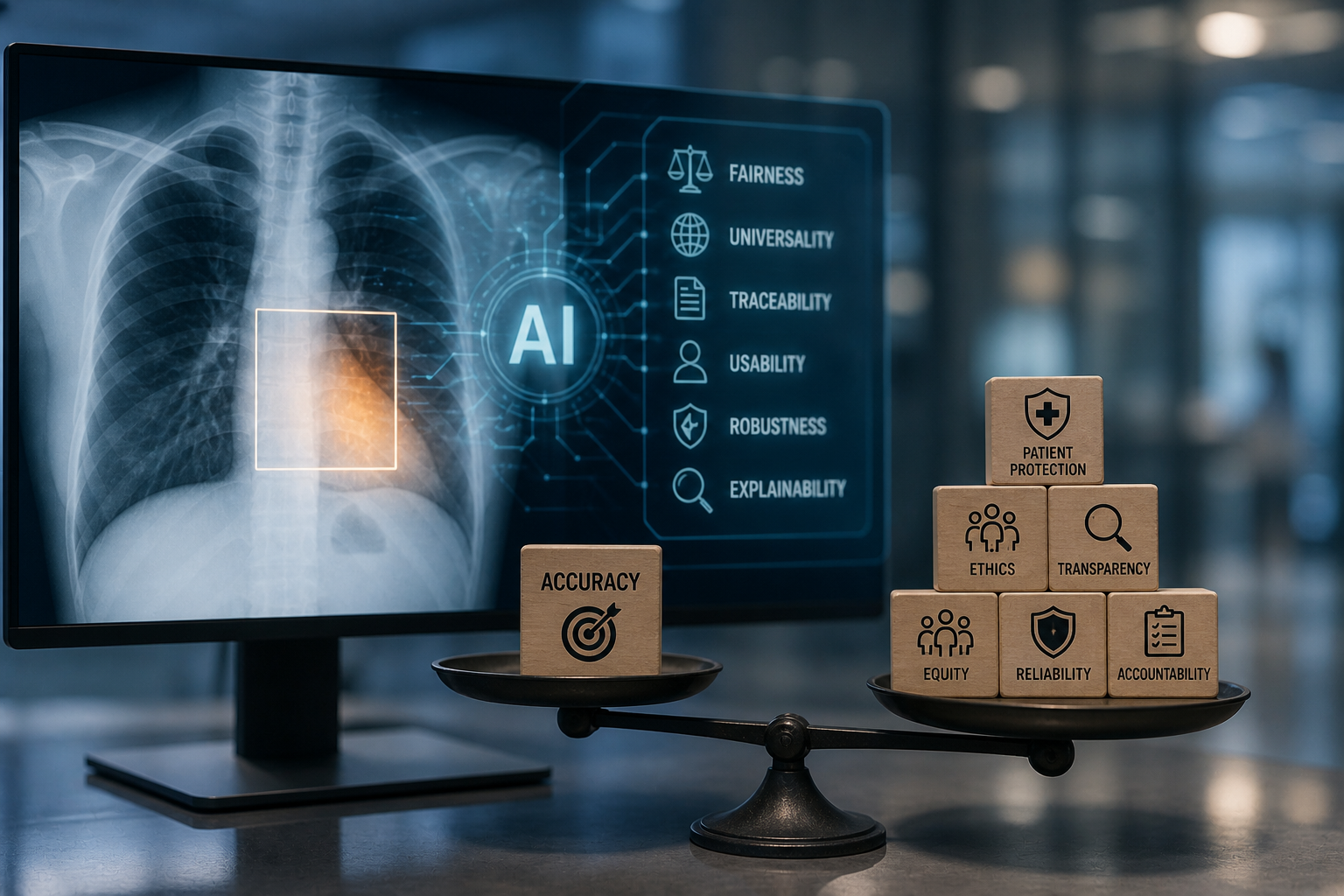

He first introduced a definition of AI readiness based on the FUTURE-AI international guideline, which states that AI systems should be fair, universal, traceable, usable, robust, and explainable. Drawing on examples from radiology AI, he illustrated that systems can achieve high accuracy while still failing in critical aspects such as bias, generalisability, or transparency.

Prof. Lekadir emphasised the need to move from principles to operationalisation by translating each FUTURE-AI dimension into concrete actions, methods, and evaluation strategies across the AI lifecycle. He highlighted the importance of early stakeholder engagement to co-design the intended use of AI systems, identify application-specific ethical risks (including potential sources of bias and error), and guide the selection of suitable AI methods, representative datasets, and multidimensional evaluation plans based on the identified risks.

He then reviewed the current state of AI readiness in radiation protection. Based on examples from CT justification and radiotherapy planning, he noted that while progress has been made, important gaps remain.

- Universality has been investigated but remains limited in low- and middle-income country (LMIC) settings.

- Robustness to variations in imaging conditions has been studied but is not yet routinely implemented.

- Traceability is largely focused on documentation, with limited post-deployment monitoring.

- Explainability has been widely explored but lacks rigorous clinical validation.

- Usability remains limited due to insufficient stakeholder involvement.

- Fairness across population subgroups remains largely under-explored and represents a critical gap.

Prof. Lekadir called for a more holistic approach to AI readiness, moving beyond an accuracy-driven focus towards comprehensive, multidimensional trustworthiness. He emphasised the need for greater investment in AI for radiation protection in LMICs, addressing challenges such as limited infrastructure and workforce shortages, to ensure AI readiness for all populations worldwide.